🎯 Quick Impact Summary

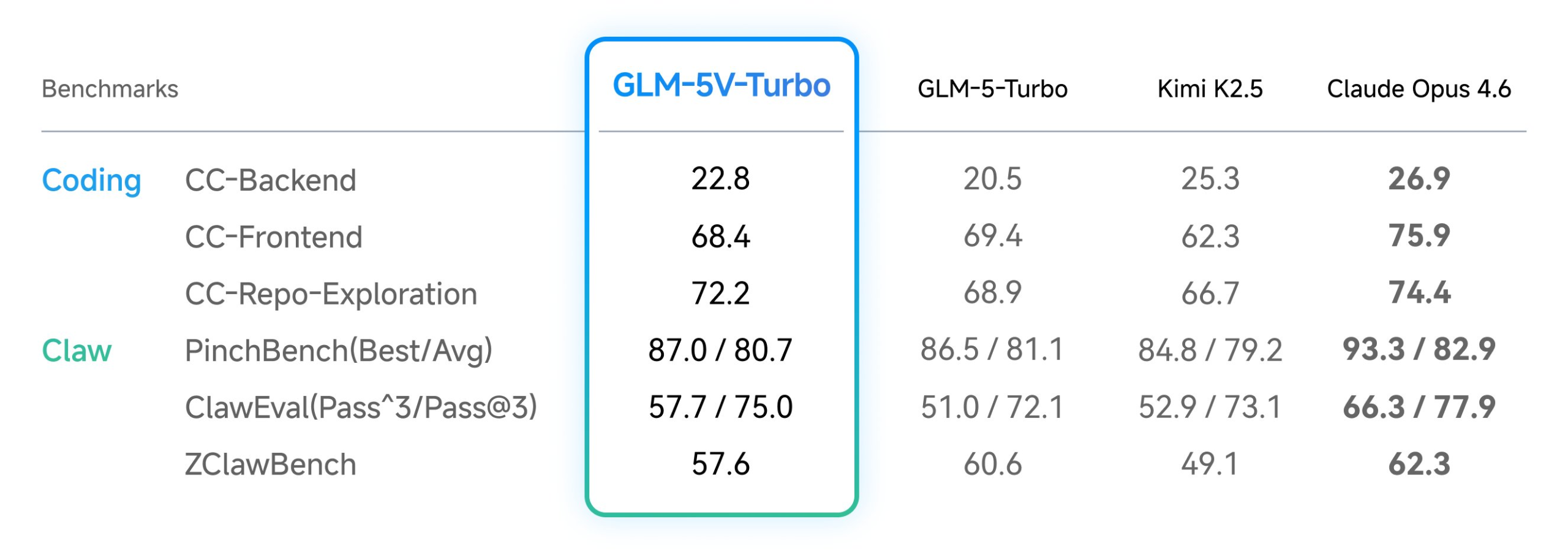

Zhipu AI's GLM-5V-Turbo targets a long-standing gap in vision-language models: translating visual information directly into executable code. Unlike traditional VLMs that excel at image description but struggle with syntax precision, GLM-5V-Turbo natively combines visual understanding with coding logic, which makes it well suited to developers building agentic systems and automated engineering workflows.

GLM-5V-Turbo introduces a fundamentally different approach to multimodal AI by treating vision and code generation as integrated capabilities rather than separate tasks.

GLM-5V-Turbo pairs a vision encoder with specialized code generation capabilities, with performance tuned for production environments.

What Each Feature Actually Means:

Before

Developers relied on separate tools for image analysis and code generation, requiring manual translation between visual understanding and syntax. Vision-language models excelled at describing images but produced syntactically incorrect or incomplete code. Teams needed multiple validation steps and human review to ensure generated code met production standards.

After

GLM-5V-Turbo handles visual understanding and code generation in a single unified model, producing immediately usable code from images. Autonomous agents can process visual inputs and execute coding tasks without human intervention. Development cycles accelerate dramatically as the image-to-code pipeline becomes direct and reliable.
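To make the Before/After contrast concrete, here is a minimal sketch of what a single image-to-code request and a basic syntax check on the result might look like. Everything specific in it is an assumption for illustration: the chat-style payload shape, the `glm-5v-turbo` model identifier, and the helper names `build_image_to_code_request` and `is_valid_python` are hypothetical and not taken from Zhipu AI's documentation. The sketch makes no network call; it only shows how an image and a coding instruction could travel in one request, and how returned Python could be screened for syntax errors before use.

```python
import ast
import base64


def build_image_to_code_request(image_bytes: bytes, instruction: str,
                                model: str = "glm-5v-turbo") -> dict:
    """Pair one image with a coding instruction in a single chat-style payload.

    ASSUMPTION: the payload layout and the model identifier are illustrative
    placeholders, not a documented Zhipu AI request schema.
    """
    # Embed the image as a base64 data URL (PNG is assumed here) so the
    # request stays self-contained.
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "model": model,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "image_url",
                     "image_url": {"url": f"data:image/png;base64,{encoded}"}},
                    {"type": "text", "text": instruction},
                ],
            }
        ],
    }


def is_valid_python(source: str) -> bool:
    """Return True if the generated source parses as Python."""
    try:
        ast.parse(source)
    except SyntaxError:
        return False
    return True
```

A check like `is_valid_python` only confirms that the output parses; it says nothing about whether the code matches the image, so it complements rather than replaces review of the generated result.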

📈 Expected Impact: Development time for vision-based automation tasks is expected to decrease by 60-70%, while code quality and production readiness improve.

For Beginners:

For Power Users:

FAQ

